Jennifer Jones-Mitchell is CEO of HumanDrivenAI

Most guidance around generative engine optimization is borrowing the wrong playbook. It treats AI like search.

Optimize for a prompt.

Win the answer.

Get cited.

Move on.

That approach feels familiar to PR teams trained in SEO-era thinking. But it fundamentally misunderstands how people interact with AI systems and overlooks the single biggest factor determining whether brands remain visible inside large language models over time.

People don’t use AI the way they use Google.

They don’t ask one question, skim one result, and leave.

They ask a question, read the response, then ask another. And another. And another.

Through hands-on GEO testing across client brands, one pattern keeps surfacing. The real power of GEO is not winning the first response. It is being present in the second, third, and fourth prompts that follow.

I call this the conversation chain, and it is the most overlooked and most critical element of durable AI visibility.

For PR professionals, that insight should feel familiar. Because GEO is not about rankings. It is about narrative continuity.

Why search thinking breaks in AI

Traditional search behavior is transactional. Someone arrives with intent, scans results, clicks a link, and exits. Visibility is measured by rank, traffic and impressions.

AI behavior is conversational.

Users are not just consuming information. They are working through understanding. Each response shapes the next question. Context builds. Meaning evolves. Sources that continue to answer follow-up questions clearly and credibly become more influential inside the interaction.

This matters because AI systems do not reward one-off relevance. They reward ongoing usefulness.

If your brand answers the first question but disappears when the user asks why, how or what next, the model pivots to other sources. Once that happens, your voice and your framing are gone.

Here is the risk most communicators underestimate. If your brand exits the conversation early, you lose control of interpretation.

As models update, retrain and reweight sources, brands that optimize only for first responses keep losing ground. The work that went into appearing for that first prompt can vanish after an update, because a single optimized answer does not demonstrate your relevance across the whole topic.

Brands that structure content to support ongoing conversation are more likely to remain visible, even as models change. That is what makes them sticky inside AI systems.

What stickiness means for communicators

For PR teams, stickiness has real consequences:

- Your messaging continues shaping how a topic is understood.

- Your framing persists beyond the initial explanation.

- Your brand stays present during decision-making moments.

- Your perspective survives model updates that reshuffle sources.

You do not stay visible in AI by being the loudest. You stay visible by being the most helpful across the conversation. That is a communications problem, not a technical one.

How PR teams should build GEO for the conversation chain

Winning GEO today requires shifting from prompt-level thinking to conversation-level strategy.

- Start by mapping the natural question progression.

- Identify the questions that follow once someone receives an answer.

- Focus on prompt progression from explanation to implication, comparison and action.

Create content that extends, not just explains. Content optimized for GEO should help AI move the conversation forward by including reasoning and next-step guidance.

Measure presence, not just appearance. A single AI mention is fleeting. The real metric is how long your brand remains relevant inside an interaction.

Durable GEO is about staying power, not spikes.
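One way to make "presence, not just appearance" concrete is to log a conversation chain and count how many turns still mention the brand. The sketch below is a minimal illustration under stated assumptions, not a standard GEO metric: the `Turn` structure, the function name, the brand "ExampleCo" and the sample responses are all hypothetical, and the stage labels simply reuse the progression described above (explanation, implication, comparison, action).

```python
from dataclasses import dataclass


@dataclass
class Turn:
    stage: str      # e.g. "explanation", "implication", "comparison", "action"
    prompt: str     # what the user asked
    response: str   # what the AI system answered (pasted in by hand here)


def presence_summary(turns, brand):
    """Summarize how long a brand stays present across a conversation chain."""
    needle = brand.casefold()
    flags = [needle in t.response.casefold() for t in turns]

    # Depth: consecutive turns, counted from the first, that mention the brand.
    depth = 0
    for mentioned in flags:
        if not mentioned:
            break
        depth += 1

    return {
        "turns": len(flags),
        "mentions": sum(flags),
        "share": sum(flags) / len(flags) if flags else 0.0,
        "depth": depth,
        "missing_stages": [t.stage for t, m in zip(turns, flags) if not m],
    }


# Hypothetical chain: the responses are invented placeholders, not real AI output.
chain = [
    Turn("explanation", "What is GEO?", "ExampleCo describes GEO as ..."),
    Turn("implication", "Why does it matter for PR?", "According to ExampleCo, ..."),
    Turn("comparison", "How is it different from SEO?", "Analysts generally note ..."),
    Turn("action", "What should my team do next?", "Start by auditing ..."),
]

summary = presence_summary(chain, "ExampleCo")
print(summary)
```

In this made-up chain the brand appears in the first two turns and then drops out, so the stages listed under `missing_stages` are where follow-up content would be needed.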

The overlooked GEO quick win: speakable schema

One of the fastest and most underused GEO improvements is metadata, specifically speakable schema.

Speakable is a schema.org property originally introduced to support voice search: it marks the sections of a page best suited to being read aloud by a voice assistant. It’s a note you make in the metadata that tells AI systems where the most direct, quotable answers live within your content, which can help models pull consistent language across conversations.

For PR teams, this matters because it improves answer accuracy, reinforces approved language, reduces the risk of AI citing outdated information, increases reuse of your framing, and supports voice and conversational interfaces.

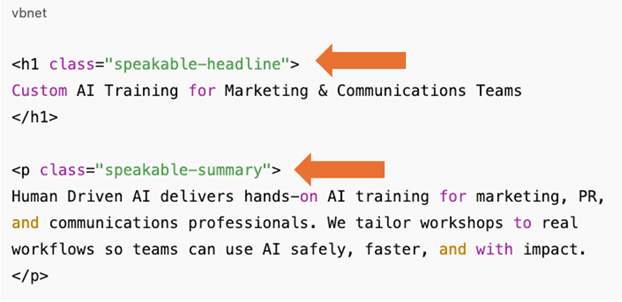

A simple example:

- A user prompts: “I’m looking for an AI training for my marketing team.”

- A brand offering AI training for marketing and communications professionals would want to surface in LLMs in response to that prompt.

- The brand can mark a two-sentence summary at the top of a service page so LLMs consistently return the same description of who the training is for, what outcomes it delivers, and how it works, rather than inventing a version from scattered copy.

Let me show you what I mean.

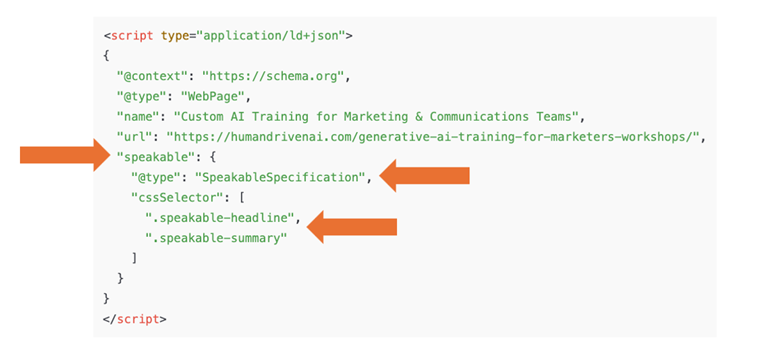

I added speakable schema to the metadata on my AI training page to point LLMs toward my exact phrasing.

In the image above, the speakable markup directs LLMs to the fastest, most direct answer to a user’s prompt about AI training for marketers.

The markup then uses CSS selectors to reference the specific sections of the page the LLMs should read.

This tells the LLMs:

- Where to find the fastest answer to the prompt

- The specific language to repeat in the response

This is not theoretical. It is a practical, low-lift way to guide AI toward the content designed to answer both initial and follow-up questions.
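For readers who want to see the shape of the markup in text form, here is a minimal sketch that builds a schema.org `SpeakableSpecification` block as JSON-LD. It is not the markup from the image above: the page name, the `example.com` URL and the CSS selectors are placeholder assumptions, and the helper function names are hypothetical. Only the schema.org terms themselves (`WebPage`, `speakable`, `SpeakableSpecification`, `cssSelector`) come from the published vocabulary.

```python
import json


def speakable_jsonld(page_name, page_url, css_selectors):
    """Build a WebPage JSON-LD object that flags speakable sections."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": page_name,
        "url": page_url,
        "speakable": {
            "@type": "SpeakableSpecification",
            # Selectors for the elements holding the short, quotable summary.
            "cssSelector": list(css_selectors),
        },
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script tag that goes in the page's HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values: swap in your own page title, URL and selectors.
markup = speakable_jsonld(
    "AI Training for Marketing Teams",
    "https://example.com/ai-training",
    [".service-summary", ".service-outcomes"],
)
tag = as_script_tag(markup)
print(tag)
```

The selectors should match the elements that contain the two-sentence summary described earlier, so the marked text and the approved language are the same thing.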

GEO that survives model updates

LLM updates will continue to disrupt which sources surface first.

Brands that optimize for the entire conversation chain do not rely on a single answer to stay visible. They build relevance into the interaction itself.

The goal is not to win the first response. The goal is to still be present when the conversation deepens.

In AI, visibility is earned turn by turn.

For PR professionals, that should feel less like disruption and more like a return to what communications has always done best: shaping understanding over time.